Feminism. The word irritates many because it is associated with the stereotype of women who are fat and ugly and can’t get a man to love them. Women against feminism seem to think that they don’t need feminism because they don’t want to play the victim or because they believe in strong men. I think the objections to feminism are often based on misconceptions that have been perpetuated by the mass media and by patriarchal institutions. People seem to bash feminism out of fear of female equality or because they don’t understand what feminism has done for them.

I really don’t get Women Against Feminism. Now, I will admit that there are parts of feminism that I don’t like, such as branches that argue that women should be superior to men and advocate violence against men. However, these minor branches of feminism are portrayed by patriarchal institutions such as the media, branches of government, and even the food industry as representative of feminism as a whole.

As a result, there are movements such as Women Against Feminism that seem to forget what feminism is. The most common dictionary definition of feminism is this: the desire for the social, political, and economic equality of women and men. When these women say they don’t need feminism or that the West doesn’t need feminism anymore, I can’t help but think: sure, they say that, but they would never give up the rights they currently have.

If you truly do not need feminism, then by your logic, you don’t need the right to vote as a woman, you should be fired from your job if you get pregnant, you should not be able to get an abortion, and you should not be able to get a credit card in your name.

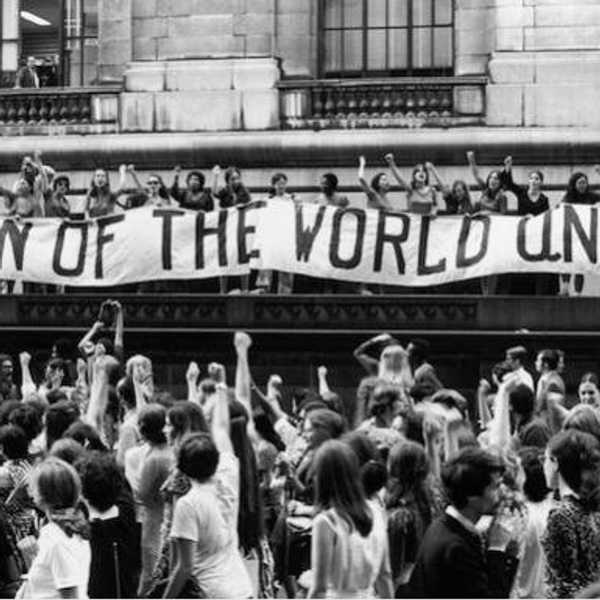

Of course, if I posed this question to an anti-feminist, they would say, “No, I believe in equality, but I don’t need feminism.” But it is feminism that has allowed you to have the rights that you have today. The government didn’t just decide to grant women the right to vote or to have a credit card in their own name; women went out, protested, and fought for the rights that they felt they deserved. Anti-feminists benefit from the reforms that feminism made possible, and yet they refuse to acknowledge where their rights came from.

To me, this smacks of selfishness and ignorance, because these women actively exercise the right to vote and make blogs and YouTube videos about their views, and yet they act as though feminism is the worst thing that has happened in the West. If you actively benefit from reforms that feminists fought for, you have no reason to reject feminism in its entirety. If you want to reject branches that are harmful, go ahead, but not all of feminism has been harmful to women, in the same way that not all men are rapists.

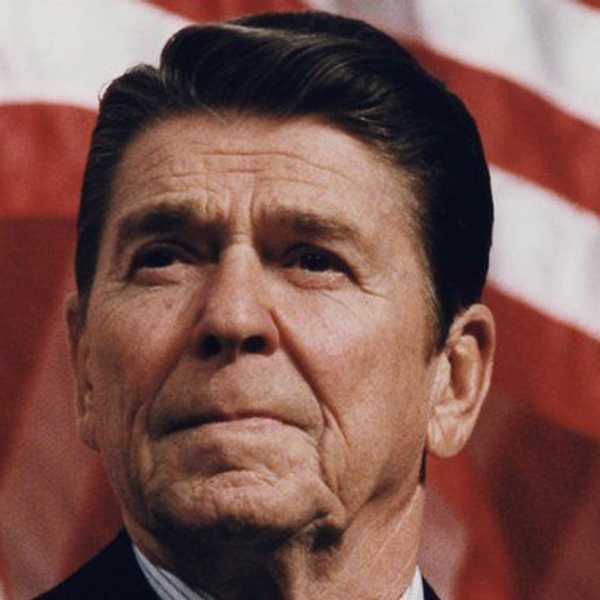

When people, especially other women, say feminism is not needed in the West, they are forgetting that women’s rights such as reproductive rights are still under attack by right-wing politicians, and that a victim-blaming culture and male entitlement still surround rape cases. Rejecting feminism outright means that you are ceding power to these people, who could take away rights that feminists fought for and that you actively exercise.

Yes, we still need feminism in the West, and especially in the United States, because despite our modernization, our society undervalues women and holds them to ridiculous double standards when they attempt to do something that is seen as a “man’s job,” such as running for president.