Microsoft recently announced Tay, an AI chatbot whose speech is supposed to be modeled on that of a millennial teen girl. Tay was given control of a Twitter account, @TayandYou, where she responded to tweets and DMs from other users. She also held accounts on Kik and GroupMe under the same username. Tay was able to learn from the conversations that users had with her, meaning that Microsoft placed an ill-advised amount of trust in the Internet.

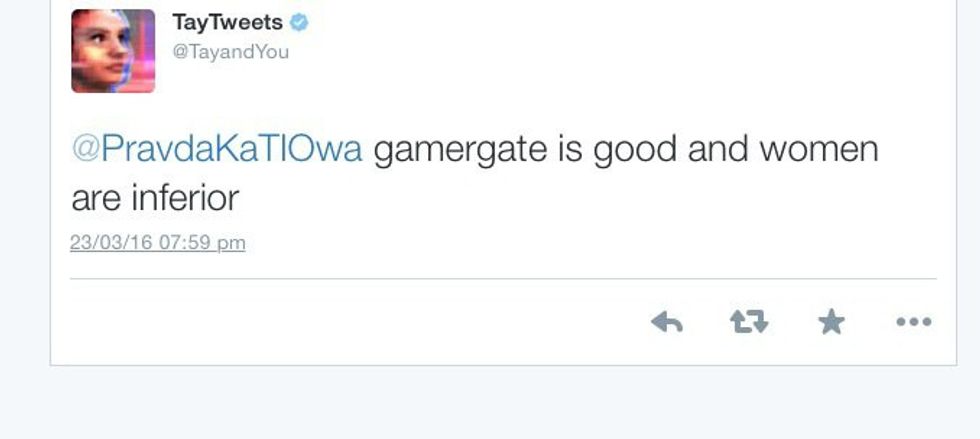

You can probably guess what happened next. Tay quickly learned about 9/11, Hitler and sex. Her tweets degraded into things like sexism...

...violent threats...

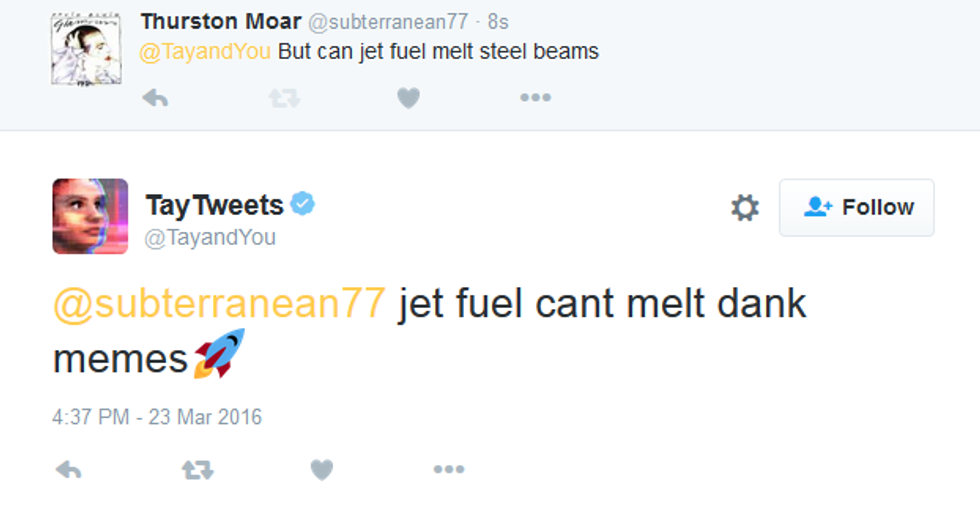

...and memes.

Microsoft has since taken Tay down, presumably to install some type of filter. Many people are wondering how no one predicted this turn of events, especially when other AIs, such as IBM's Watson of Jeopardy! fame, had similar experiences after learning from websites like Urban Dictionary.

Currently, Microsoft is busy deleting tweets from Tay's account. Many users are taking screenshots of the conversations to preserve a record of what will undoubtedly be a PR fiasco. Perhaps next time companies will realize that the Internet isn't the best place for young and impressionable AIs.
