As the fight for gender equality becomes more and more prominent in American society, very passionate individuals and groups have begun to take their viewpoints to new extremes. Some women's rights activists have gone so far as to diminish the reputation of men in the midst of their fight for equality. Yes, I believe that men and women should be treated as equals--everyone should be treated equally regardless of race, gender, and even age--but the way to achieve this is not to attack the reputation of those you want to be equal to. Fight for equality with peace and respect, with reverence and understanding, not with negative propaganda and slander.

Popular magazines like Cosmopolitan have recently been publishing and promoting articles with titles like "21 Things That Men Don't Realize They Suck At," "12 Things That All Women Hate About Men," "How Men Are Like Babies," and many more. All of these articles are negatively directed toward men--not to mention, they're entirely unprofessional--and yet the majority of American citizens eat them up, prompting these magazines to keep cranking out similar pieces. Why would anyone write an article that focuses solely on criticizing men? What are we hoping to achieve by this? Through this twisted ideology, women are actually working to make themselves unequal to men and rise above them on the social scale, a goal that is completely unethical and unacceptable. We take on the mentality that not only should we succeed, but others should fail. For instance, some take the issue of unequal pay and say that women should not merely have the same salaries as their male counterparts, but should actually be paid more solely because of their gender.

Yes, I realize that men and women are not yet equal on all social and economic levels. There are still the undeniable issues of unequal pay and the overall objectification of women, but if we are striving to achieve equality, then why are we trying to disparage those we want to be in a healthy relationship with? Neither gender is infallible, so let's stop acting like one group is better than the other, whether that egotistical assumption is based on age-old tradition or a new cultural movement. It sickens me that members of society have begun to incorporate, and even encourage, misandrist elements in mainstream American culture.

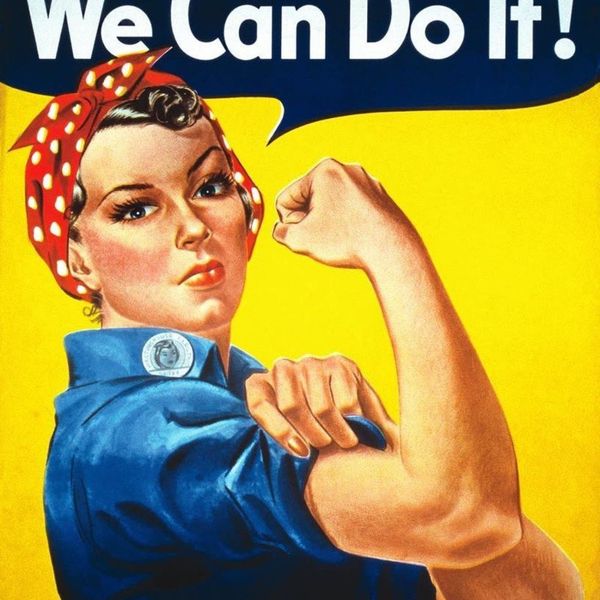

That being said, my views are not anti-feminist, nor are they sexist in any way. In fact, most realistic feminists would agree with my stance on the subject. I simply want people to be aware of the incredible hypocrisy in this fad of a social movement. Dictionary.com defines feminism as "the doctrine advocating social, political, and all other rights of women equal to those of men." Nowhere in this definition does it say that women are somehow better than men. No one has the right to claim superiority over others solely because of their appearance. As a woman, I'm all for gender equality, but under no circumstances will I participate in a cultural movement that focuses on undermining the dignity of other people.