The possibility of the extinction of Homo Sapiens is generally discussed in relation to existential threats such as climate change or asteroid impacts. It is always considered a danger and an outcome to be avoided.

But what if our extinction were part of the evolution of life and even of matter, and purposefully engineered by us?

* * *

Creating something better than us.

This sounds far-fetched, but we may be confronted with such a scenario within a few decades, and within a century at the latest. The basic idea is that we will be capable of creating a new, better species, which will gradually supplant us.

There are two ways in which this could happen: genetic engineering and artificial intelligence.

Designing a new human.

Genetic engineering is advancing by leaps and bounds, and as the cover story of The Economist of August 22nd, “Editing Humanity,” clearly explains, the prospect of genetically engineered "designer babies" is not so far off.

Initially, genetic engineering will help us create better Homo Sapiens. But with time, as genetic engineering gains acceptance and becomes more sophisticated, the genetic changes will amount to the creation of an entirely new species of humans, one that thinks and behaves differently from us, and that develops entirely new social structures and political and economic systems.

Extinction, peaceful or not.

This scenario, in the long term, almost certainly leads to the extinction of Homo Sapiens. The new species will be superior to us; if it were not, we would not create it, or at least we would not propagate it after creation. Thus, by the laws of evolution, it would eventually replace us. However, the manner of this extinction is far from clear.

A peaceful possibility is that the process happens gradually, perhaps even without us realizing it. Homo Sapiens parents will prefer to have their children be genetically enhanced; thus, most new children will be of the new species, and very few children will be Homo Sapiens. Furthermore, the new species will almost certainly live longer, and may even be a-mortal. Thus, gradually, Homo Sapiens will be replaced by the new species.

The process could also turn violent. Homo Sapiens may realize that they have created an entirely new species and are in danger of going extinct, and seek to eliminate the new species. But once the new species is created, it would be almost impossible to completely eliminate it, especially because it will most likely still look like us. Because the new species is superior, natural evolution will assert itself, and even if Homo Sapiens do succeed initially in suppressing the new species, over the long term (that is, across centuries or even millennia), the new species will fully replace us and Homo Sapiens will go extinct.

Artificial Intelligence.

Then there is the other, far more radical, possibility: that we create Artificial Intelligence.

This scenario, too, is rarely seen as positive. Many of the world’s brightest minds, including Elon Musk and Stephen Hawking, have warned that AI poses an existential threat to humanity, which it most certainly does. But few stop to question whether this is necessarily a bad thing.

It certainly could be a disaster, but if we managed to create AI that is "better" than us (better being subjective, of course; more on this later), why would it be such a terrible thing if it replaced us as a species?

AI has the inherent advantage that, because it has no fixed physical form, it can change itself easily. Who we are as Homo Sapiens is embodied in the physical world, in our bodies and brains; AI lives in software. Thus, any change we make to ourselves requires a physical change, a handicap that AI does not suffer. In this context, if we set aside our emotional attachment to Homo Sapiens as a species, then a transition from Homo Sapiens to Artificial Intelligence as the primary conscious beings could be seen as a positive development.

AI or a better us: does it matter?

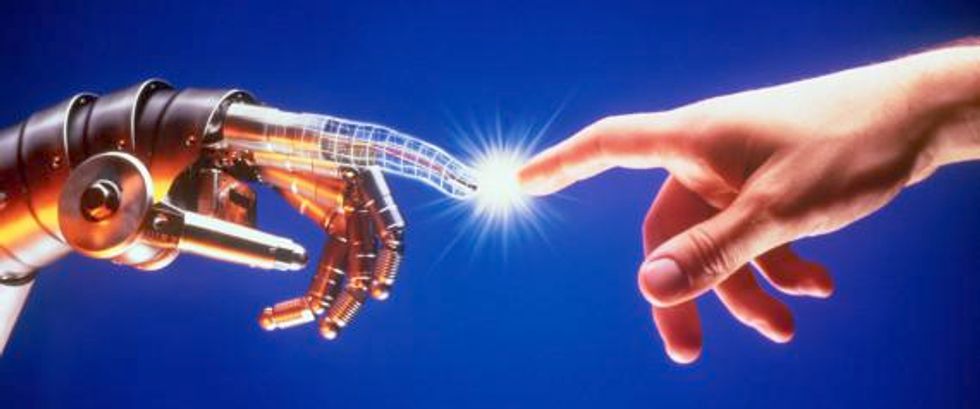

Right now, there is a clear division between humans and machines, and the two scenarios I have presented are distinct. But that may not remain so.

One version of a concept called the Singularity holds that humans and machines will soon merge and become indistinguishable. Technology will become so integrated into our bodies and minds that we will become, essentially, cyborgs. Estimates for when the Singularity will occur vary widely, but the earliest place it no more than 30 to 40 years away.

The general assumption is that when we develop AI, it will be separate from us. But what if AI becomes merely an extension and enhancement of us? What if we become partly AI, as our biological brain plays an ever smaller role in our cognition while technology plays an ever larger role?

In that case, the two scenarios I have presented become one, in which we create not only a new species, but a new type of life and consciousness, one that is neither entirely biological nor fully technological.

* * *

The next step in life? The next step in matter?

Since life first emerged, it has been reliant on chance to evolve, and has been subject to biological constraints. In fact, since the big bang, all matter has been subject to external forces beyond its control. To the best of our knowledge, matter has never had the ability to consciously shape itself.

We are on the verge of transcending that. Whether we genetically enhance ourselves, create AI, or do both in the Singularity, we, as matter, will have consciously shaped ourselves, and purposefully altered not only our physical bodies but also our minds and the fundamental characteristics that define us. We are on the verge of one of the greatest revolutions not only of life but of matter since the big bang. It will be accompanied by our own extinction, yes, but our legacy will continue through whatever new being we create. And in the face of such an incredible achievement, does the continuation of our specific species really matter? I personally do not think so, but this is a moral issue, with no single correct answer. It is one that we will have to grapple with as a species.

The practical implications.

So what are the implications for today’s world? Does anything still matter, if our entire species is going to become extinct?

It most certainly does. We are the ones who will decide the characteristics of the new species, and our social, political, and economic systems will inform at least the first generation of the new species and have a lasting impact. Genetic engineering and the creation of AI are not linear progressions with a single possible outcome, or even a clearly preferable one. It is up to us to decide what we think is the ideal form of life and consciousness: what characteristics should it have? Are emotions necessary? Do we need genders? To what extent should technology replace biological functions? Do we still want individuals or is a single, collective entity preferable?

These questions have no clear answers, and our response to them will shape the future course not just of human history but also of life and even of the universe.

In his book Sapiens: A Brief History of Humankind, from which I got the basic idea of this essay, Yuval Noah Harari finishes by looking forward to the possibilities of our creating a new species and going extinct. A question from the book's closing pages is powerful and encapsulates perfectly the only question that really matters: “What do we want to become?”